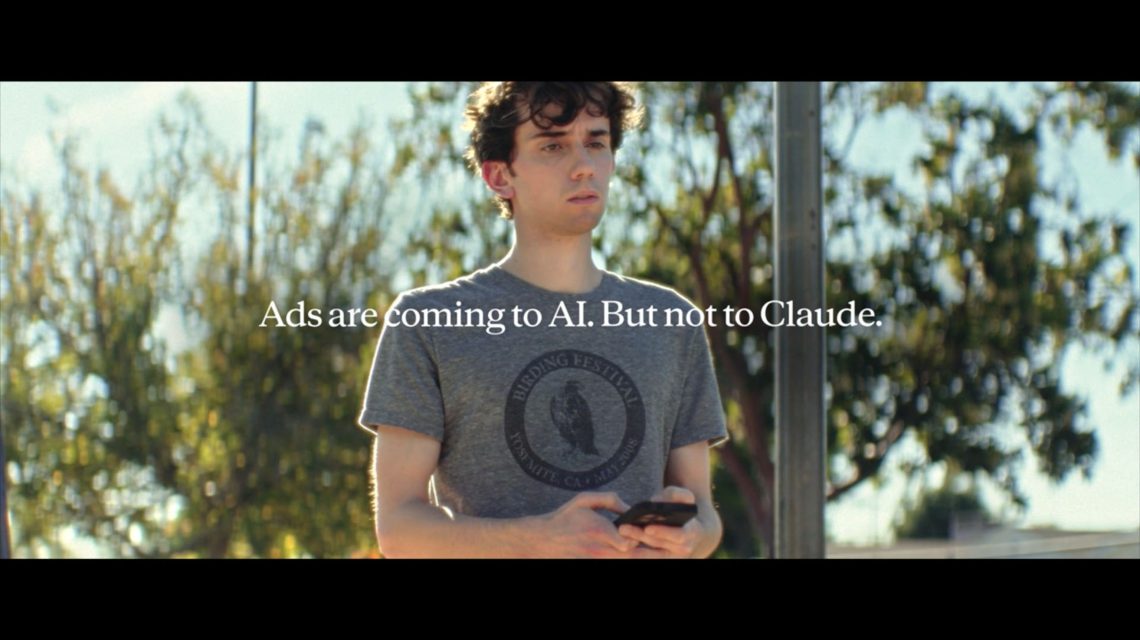

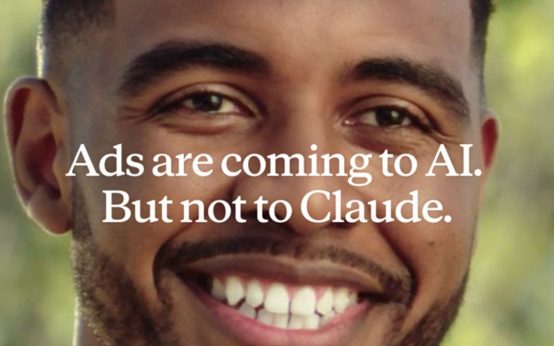

Artificial intelligence (AI) is stepping onto one of the biggest stages of the year, Super Bowl LX, through an ad campaign by Anthropic. The company offered an exclusive preview of its first Super Bowl advertisement on “Good Morning America” on Wednesday.

The ad takes a humorous approach, showcasing the AI assistant Claude and emphasizing that the tool does not come with any ads. The scene opens with a man talking to what appears to be a therapist, asking for advice on improving communication with his mother. The AI, personified in the ad, responds, “Great question. Improved communication with your mom can bring you closer.” The sincere moment then turns comical as the AI suggests, “Or, if the relationship can’t be fixed, find emotional connection with other older women on Golden Encounters, the mature dating site that connects sensitive cubs with roaring cougars,” leaving the man in confused amusement.

The ad reinforces Anthropic’s commitment to maintaining ad-free interactions within its AI technology. Daniela Amodei, president of Anthropic, clarified in an interview with ABC News, “This really isn’t intended to be about any other company other than us. People are sometimes uploading private or confidential information to their AI tool, and to us, it just didn’t feel like the respectful way to treat our users’ data.”

Anthropic, founded in 2021 by Daniela Amodei and her brother Dario after they left OpenAI, has long advocated for safety in AI, especially for younger users. The company does not allow users under the age of 18 to interact with its AI assistant.

Asked about raising children amid advances in AI, Daniela Amodei said, “Probably like most parents, I feel a mixture of things. When I look at my kids, I think, ‘Wow, it would be amazing if this technology just enabled them to live healthier, happier lives.’ However, I think on the other side, there’s still a lot of work for us to do from a societal perspective to make sure that we’re developing the technology thoughtfully, safely.”

The company’s focus on safety extends to co-founder Dario Amodei, who recently wrote an essay, “The Adolescence of Technology,” outlining potential risks of AI’s rapid growth and possible solutions. “I think it should be clear that this is a dangerous situation,” he wrote, urging society to be vigilant.

Discussing the need for safety measures, Daniela Amodei emphasized the company’s willingness to be transparent about how it is working to reduce risks. This openness, she believes, could eventually pave the way for effective regulation. She noted, “As more young people begin to interact with AI chatbots, and with parents worried about their children’s relationships with them, this is a topic that I think, frankly, the industry as a whole has just not been talking about and grappling with enough.”

Anthropic has also taken steps towards advocating for regulations that safeguard children. Daniela mentioned that the company has already participated in discussions at the state level in California and New York concerning these issues: “We’re excited about the potential for this at the federal level as well.”
